Images: Seven Forms

Final Performance: Seven Forms

Seven Forms – a remote-controlled Pinocchio simulation

Short documentation (2 min)

Full documentation (10 min)

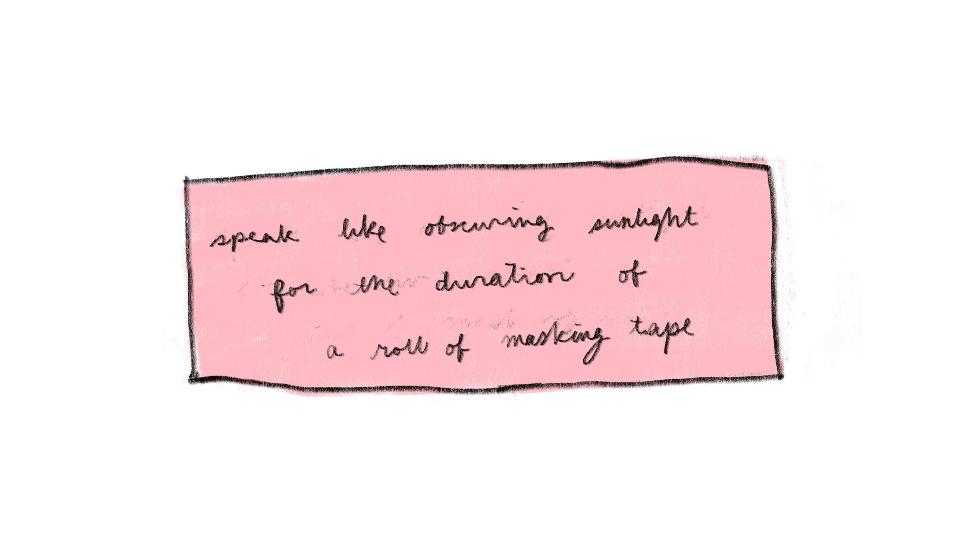

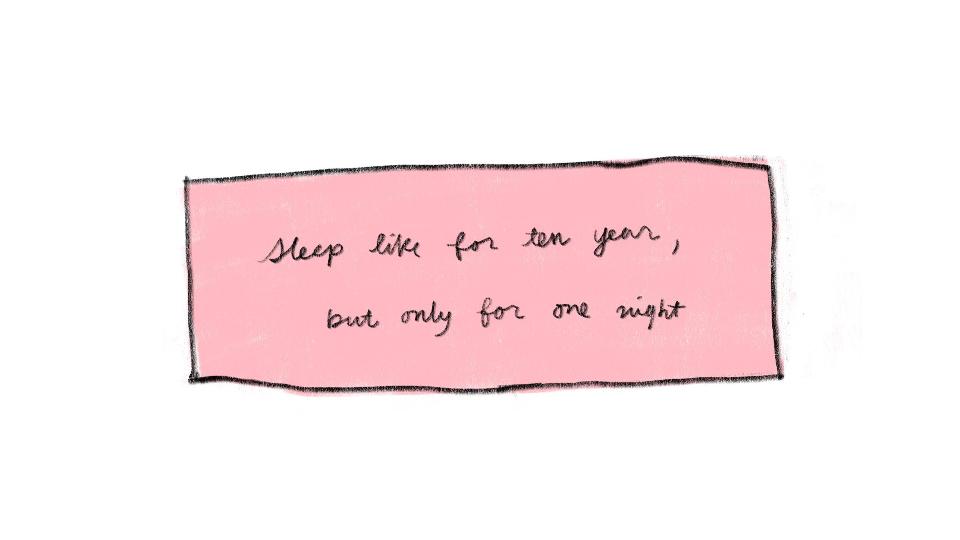

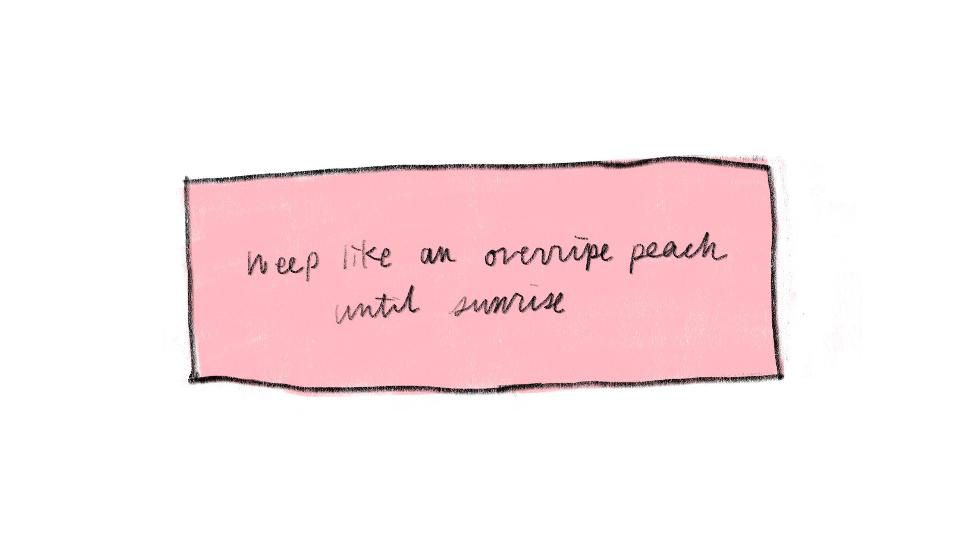

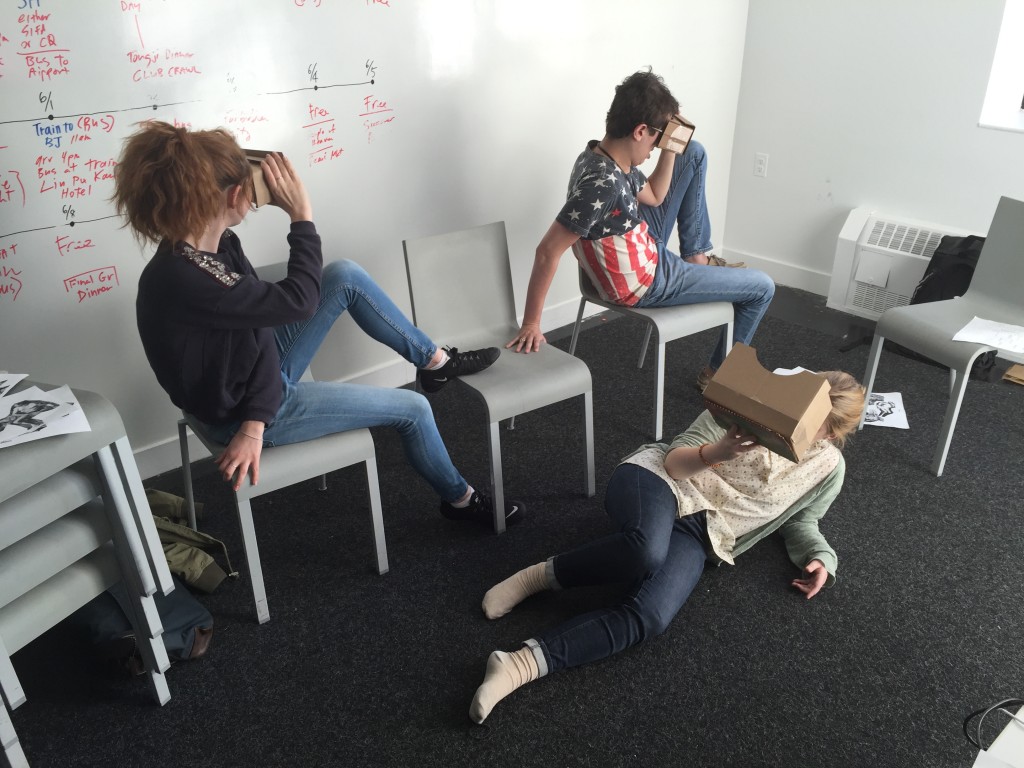

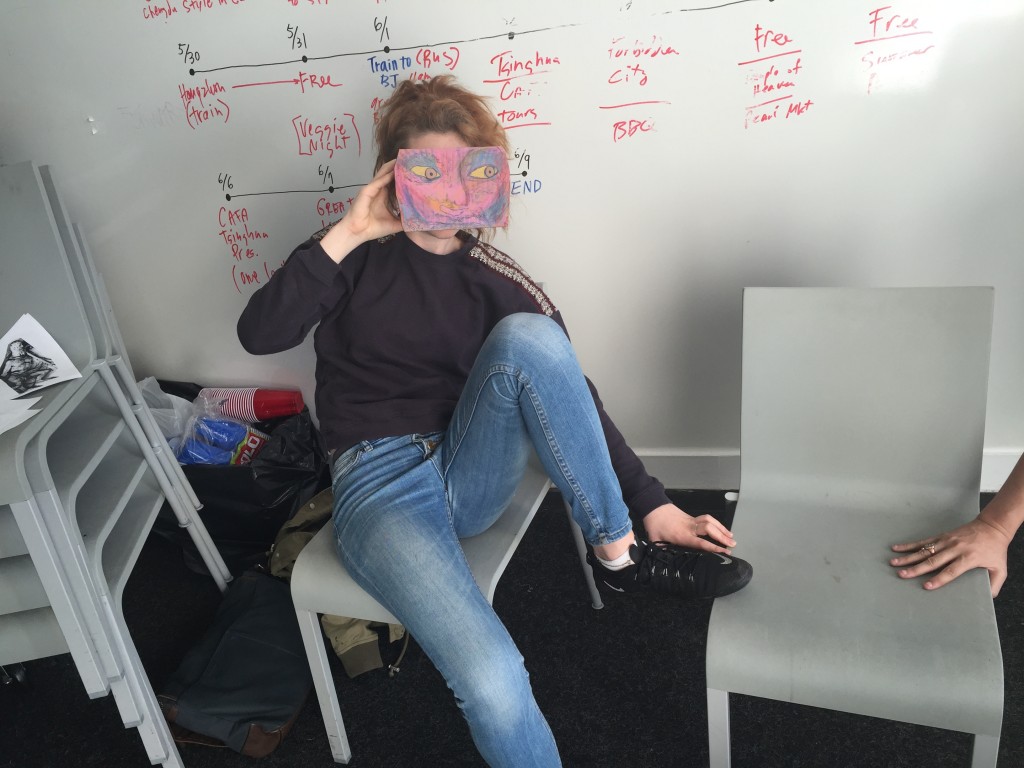

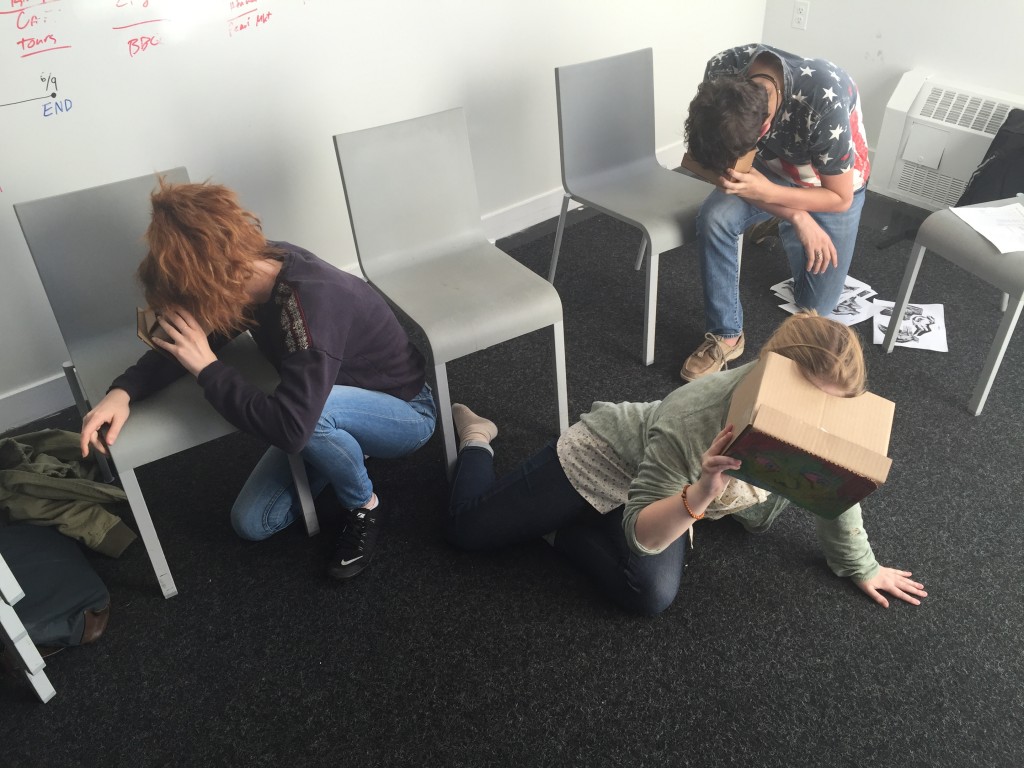

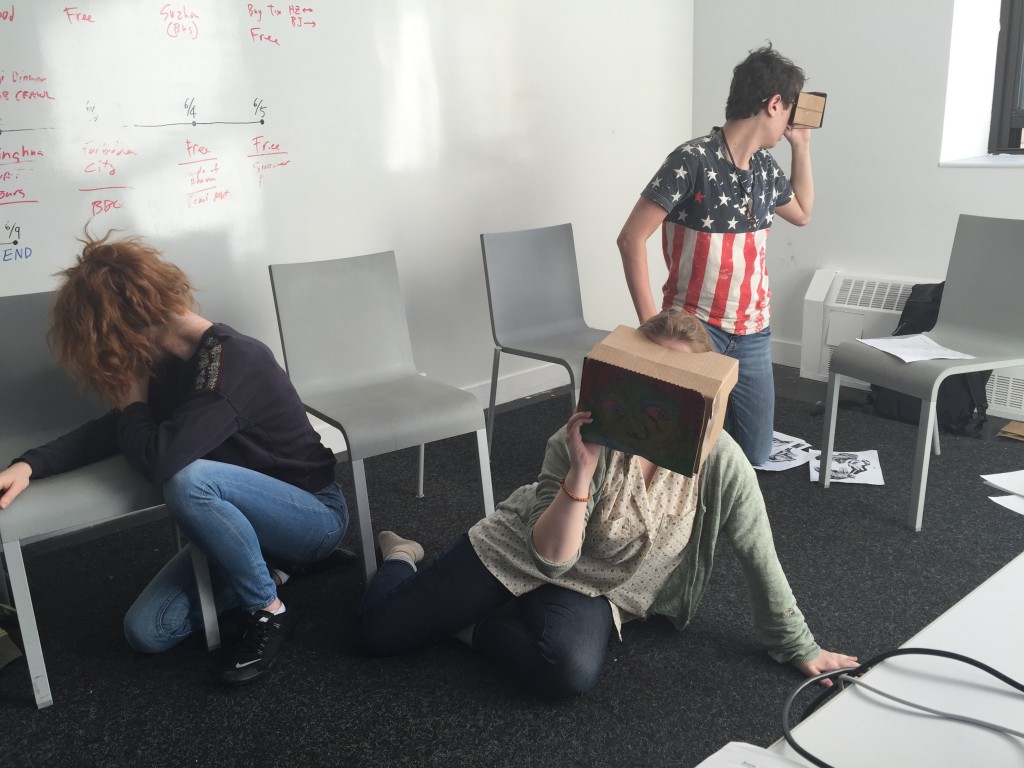

My final piece “Seven Forms” is a short performance in three scenes with three performers. It is based on the myth of Io and Jupiter as told by Ovid, as well as Disney’s 1940 film Pinocchio. The three performers each wear a Virtual Reality (VR) headset and cannot see anything outside the VR space. Inside the VR space, content is made available to the performers on cue by remote control. The content consists of lines, which the performers read, and images, which they remake. The performers must read the lines and try to remake the images as soon as they see them. They are not allowed to learn anything by heart.

I am primarily trying to accomplish two goals with this piece. The larger goal is to explore new strategies for staging performances. By this I mean directing performers, creating stage objects, developing new lighting techniques, etc., but most importantly using the newest technology to bring performance into unexplored territory. Performing, then, can become a sort of research: if we use this particular technology in this particular way, where will it lead us?

Another goal of mine with this project is to explore versions as a concept. This comes from my ongoing interest in digital archiving and digital compression. I am interested in how digital compression of images works: how small, almost invisible changes in compression can have big effects when applied over and over again. I am interested in how to keep track of these changes, and how to work with different versions of the same thing, both practically and theoretically. The exploration of small changes, large changes and sudden changes is fascinating to me. Therefore, this piece deals thematically with metamorphosis and versioning.

Versioning

I am using the myth of Jupiter’s rape of Io and the Disney movie Pinocchio as the framework for the entire piece. Told extremely briefly, the myth as told by Ovid is the following: Jupiter, the Roman god, tricks Io, a young nymph, into a forest, where he rapes her. When Jupiter’s wife Juno becomes suspicious, Jupiter transforms Io into a heifer in order to hide his misdeed. Juno keeps Io for a while; Io is then released and, while she is begging by the Nile, transformed back into human form. I am using the myth of Io because there is an immense amount of imagery depicting it.

From Pinocchio, I am using the famous “Donkey scene”. In this scene, the children are turned into donkeys and sold as slaves as a punishment. It is a very powerful scene in the movie. I am using the lines from the movie, so the performers say exactly the same lines as the different characters in the Disney movie.

Both stories – the myth of Io and Pinocchio – tell the age-old story of humans being transformed into animal form. Both are classical metamorphosis stories, as we also know them from Dr. Jekyll and Mr. Hyde, Kafka’s story of Gregor Samsa, and superhero stories such as Superman and the Hulk.

I am telling these two stories as one performance through the use of “staged images”. By this I mean static spatial arrangements of figures (performers) in specific gestures and positions. These positions are based on the classical imagery of the myth of Io.

Staging strategies for performance

Performance theatre is an area that is experimental almost by definition. Usually, but not always, these are fairly small productions with relatively small audiences. Therefore a lot of what happens in performance theatre can be seen as research into new ways of staging. This part of the essay will therefore also involve precedents for this type of work.

I am interested in using new technologies as a tool to create performances. This is in opposition to traditional theatre, which uses new – and very old (!) – technologies as a way of enhancing performances. The traditional theatre clearly prioritizes the drama above all. This means that all tools, technical and otherwise, are there to enhance the drama, i.e. the text. Actors, music, sound, scenography, etc. are only there if they help to clarify or underscore the drama of the text.

I want to flatten this traditional hierarchy. I want to make all components of the performance of equal importance. The text should be at the same level as the performers. The performers should be at the same level as the light. The music should be at the same level as the scenography, etc.

For example, in a performance I can let the light guide the text, the music change the lines for the performers, and objects direct the movement of the performers. Imagine this – admittedly silly – example: the follow spot of the traditional theatre becomes a guiding spot. The actors will have to run to be in the spotlight, and not vice versa. This would deeply change the dynamics of the performance, and it would be a fun and interesting experiment.

Technical setup of “Seven Forms”

The VR headsets are made using the Google Cardboard setup. The headset itself is made from Google’s original drawings of the Cardboard device, but altered to fit the aesthetics of the performance. An iPhone is placed inside the Cardboard headset. Using Google Cardboard’s SDK in Unity, I have turned the Unity game into a VR “game”.

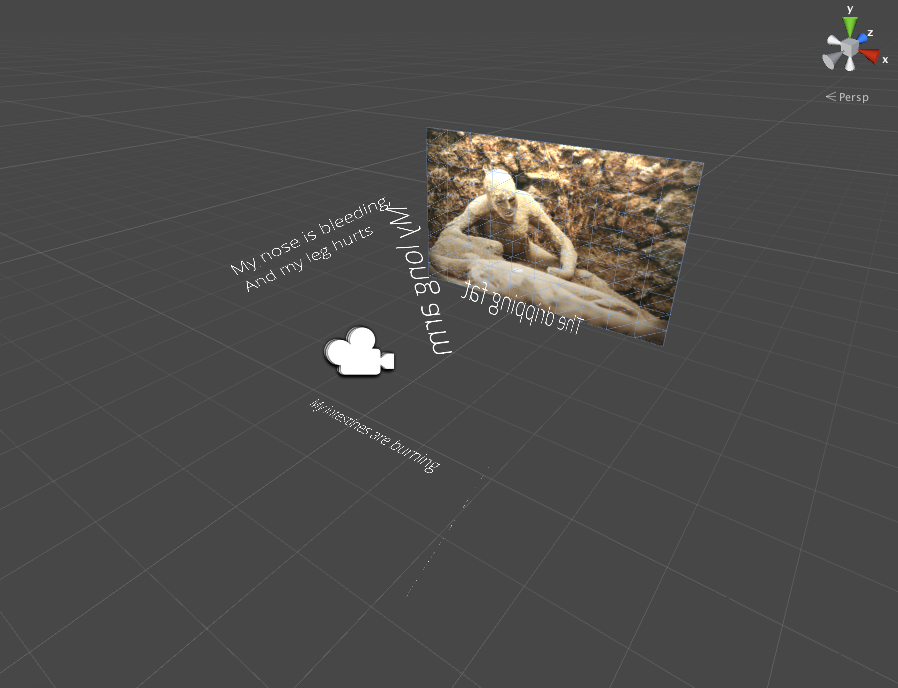

In Unity, I set up three different scenes, one for each performer. In these scenes, I place all the lines and the images in different places surrounding the VR camera. This is done in order to make the performers look around for the lines. The idea is that they cannot passively receive the lines, but are forced to actively look around for them.
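One way to picture this layout is the sketch below, which computes evenly spaced positions on a ring around a camera at the origin. It is written in Python for illustration and is not the Unity scene itself. The even spacing, the radius, and the function name `ring_positions` are all assumptions; the actual scenes were arranged by hand in Unity and may look quite different.

```python
import math


def ring_positions(count, radius=2.0, height=0.0, start_deg=0.0):
    """Return (x, y, z) points spaced evenly on a circle around the origin.

    Uses a Unity-style convention (y is up, +z is straight ahead), so an
    angle of 0 degrees is directly in front of the camera and 90 degrees
    is to its right.
    """
    positions = []
    for i in range(count):
        angle = math.radians(start_deg + 360.0 * i / count)
        x = radius * math.sin(angle)
        z = radius * math.cos(angle)
        positions.append((x, height, z))
    return positions


# Hypothetical example: six lines of text placed at eye height, 2 m away.
line_positions = ring_positions(6, radius=2.0, height=1.6)
```

With positions like these, at most a couple of lines fall inside the field of view at once, so a performer has to turn their head to find the rest.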

The complexity of the piece really comes from the ability to control the lines in the three headsets simultaneously. I needed this to be able to control the tempo of the piece, while the performers would still be kept in the tension of not knowing exactly when and where the next line would appear. I needed the ability to cue the performance, so that I, as the director and stage manager in one, could run the performance.

To solve this problem of cueing, I decided to use OSC signals as the triggering mechanism. I chose this partly because I would be able to prototype it rather quickly using existing tools such as TouchOSC (by Hexler). I also chose it because of the ability to set up a local network and trigger multiple devices simultaneously. I needed not only to trigger lines in all three headsets, but also to trigger the lamps, which were run from an openFrameworks application on my own Mac laptop.
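To make the idea of one cue reaching several devices concrete, here is a minimal Python sketch that encodes an OSC message by hand and sends the same UDP packet to a list of targets. It is an illustration only, not the TouchOSC setup used in the piece. The address `/cue`, the IP addresses, the port, and the function names are hypothetical.

```python
import socket
import struct


def osc_pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def osc_message(address: str, *args) -> bytes:
    """Encode an OSC message with int, float, or str arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)  # 32-bit big-endian integer
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)  # 32-bit big-endian float
        elif isinstance(arg, str):
            tags += "s"
            payload += osc_pad(arg.encode("ascii"))
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return osc_pad(address.encode("ascii")) + osc_pad(tags.encode("ascii")) + payload


def send_cue(packet: bytes, targets) -> int:
    """Send one packet to every (host, port) target; return how many were sent."""
    sent = 0
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        for host, port in targets:
            sock.sendto(packet, (host, port))
            sent += 1
    return sent


# Hypothetical static addresses: three headsets and the lighting laptop.
TARGETS = [
    ("192.168.1.11", 9000),
    ("192.168.1.12", 9000),
    ("192.168.1.13", 9000),
    ("192.168.1.20", 9000),
]
```

Calling `send_cue(osc_message("/cue", 3), TARGETS)` on the local network would deliver cue number 3 to all four devices in quick succession. Because OSC normally travels over UDP, there is no delivery guarantee, which is one reason a quiet, dedicated network helps.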

I found an OSC plugin for Unity. To my surprise, it worked directly and without any big changes when I ran my own Unity app on my iPhone. The only problem with it is that the IP addresses cannot change, so it is important to set them up correctly and statically when the apps are deployed onto the iPhones. So far, this has not turned out to be a major practical problem.

I am also controlling four theatrical lamps. These are controlled over DMX from my Mac laptop, via a hardware interface, the DMX USB Pro from Enttec. Using Kyle McDonald’s ofxDmx addon and the openFrameworks addon for OSC, ofxOsc, I wrote a simple piece of control software. This software listens to OSC signals and converts them into DMX signals. The DMX signals are in this case actually just strings of numbers that are output via a serial connection to the Enttec hardware. It is this conversion that Kyle McDonald’s addon does.
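The “strings of numbers” can be sketched roughly as follows. This Python example builds the byte frame that, as far as I understand the published Enttec DMX USB Pro API, wraps a set of channel levels before they are written to the serial port. It is not the ofxDmx source code. The function name, the constant names, and the padding to a minimum of 24 channels are assumptions of this sketch.

```python
DMX_START = 0x7E       # start-of-message delimiter
DMX_END = 0xE7         # end-of-message delimiter
LABEL_SEND_DMX = 6     # "output only send DMX packet" request
MIN_CHANNELS = 24      # assumed minimum number of channels per frame
MAX_CHANNELS = 512     # size of one DMX universe


def enttec_dmx_packet(levels):
    """Wrap channel levels (channel 1 first) in an Enttec-style serial frame."""
    if len(levels) > MAX_CHANNELS:
        raise ValueError("a DMX universe holds at most 512 channels")
    # Clamp every level to one byte and pad short frames with zeros.
    channels = [max(0, min(255, int(v))) for v in levels]
    channels += [0] * (MIN_CHANNELS - len(channels))
    data = bytes([0] + channels)  # leading 0 is the DMX start code
    size = len(data)
    header = bytes([DMX_START, LABEL_SEND_DMX, size & 0xFF, (size >> 8) & 0xFF])
    return header + data + bytes([DMX_END])


# Hypothetical example: four lamps on channels 1 to 4 at different levels.
frame = enttec_dmx_packet([255, 0, 128, 64])
```

In a real setup the resulting bytes would be written to the serial device that the interface exposes. That step is left out here because it depends on the machine and on a serial library.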

Rehearsals for MSII Final

Testing performer’s app

Testing with Terricka, Jane and Chao

First prototype of performer 1

First prototype of performer 1 is finally working.

I am cueing the lines through TouchOSC from my iPad, over a local network, to the iPhone, which runs a VR app made in Unity.

Design opportunities in the technoscape

Notes from Post-Planetary Design class, March 29:

What design opportunities do we see in the technoscape to escape velocity narrative?

In relation to Vinge’s Rainbows End, Spike Jonze’s Her, Nick Land’s Meltdown, Stross’ Accelerando

Augmented reality: new sovereignties (re.: Benjamin Bratton) starting to exist with the cloud etc. – why are there different sovereignties for the AR and the physical/material world?

For example: an embassy in a foreign country is governed by the laws of the country that the embassy represents. It all comes back to the physical: everything comes back into the network of physical carriers (the body etc.)

Reality may not be as real as we think it is; or that the axioms may not be bounded by what we consider to be physical laws. A bit of elbow room for ontologies —

The isomorphism of one system and another (the example: turbulence observed in milk in a coffee cup and turbulence observed on the surface of Jupiter). Laminar flow.

The moment where turbulence takes place – the onset of turbulence – also happens in systems that do not look liquid at all.

Network capacity – communication networks – Is that a fundamental natural occurrence, where the internet is a part of that? Like fungi networks, mycelium etc. Does that mean that computational systems have evolved by themselves in a way? Is it possible to say that natural networks and the internet are isomorphic?

What if we take the anthropocentric out of the design and say: Humans were agents who participated in an emerging platform rather than Humans designed the internet.

What does intelligence mean? What are intelligent species? If intelligence is measured across lifespans —

But what constitutes agency?

Owning pieces of infrastructure, private infrastructure

Control of LED lamp through OSC

Controlling the RGB LED lamp through an openFrameworks app that is listening to OSC signals on a local network.

Using the ofxOsc addon and the ofxDmx addon by Kyle McDonald.

Running TouchOSC by Hexler on the iPad.

Hardware interface for the DMX control is the Enttec DMX USB Pro Mk2.
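The core of such an app is a small mapping from incoming fader values to DMX channel levels. The Python sketch below shows one plausible version of that mapping, not the openFrameworks code itself. It assumes that the faders send floats between 0 and 1 and that the lamp uses three consecutive channels for red, green and blue. Both assumptions, along with the function names, are mine.

```python
def fader_to_dmx(value: float) -> int:
    """Map a fader value in the range 0..1 to a DMX level in 0..255."""
    clamped = min(1.0, max(0.0, value))
    return int(clamped * 255 + 0.5)  # round to the nearest level


def rgb_channels(red: float, green: float, blue: float, first_channel: int = 1):
    """Return {channel: level} for a lamp with consecutive R, G, B channels."""
    return {
        first_channel: fader_to_dmx(red),
        first_channel + 1: fader_to_dmx(green),
        first_channel + 2: fader_to_dmx(blue),
    }


# Hypothetical example: a warm orange on a lamp patched to channels 1 to 3.
levels = rgb_channels(1.0, 0.5, 0.0)
```

Clamping matters here because a stray value outside 0..1 would otherwise produce a level that does not fit into a single DMX byte.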

Thanks a lot to Dana Martens!!

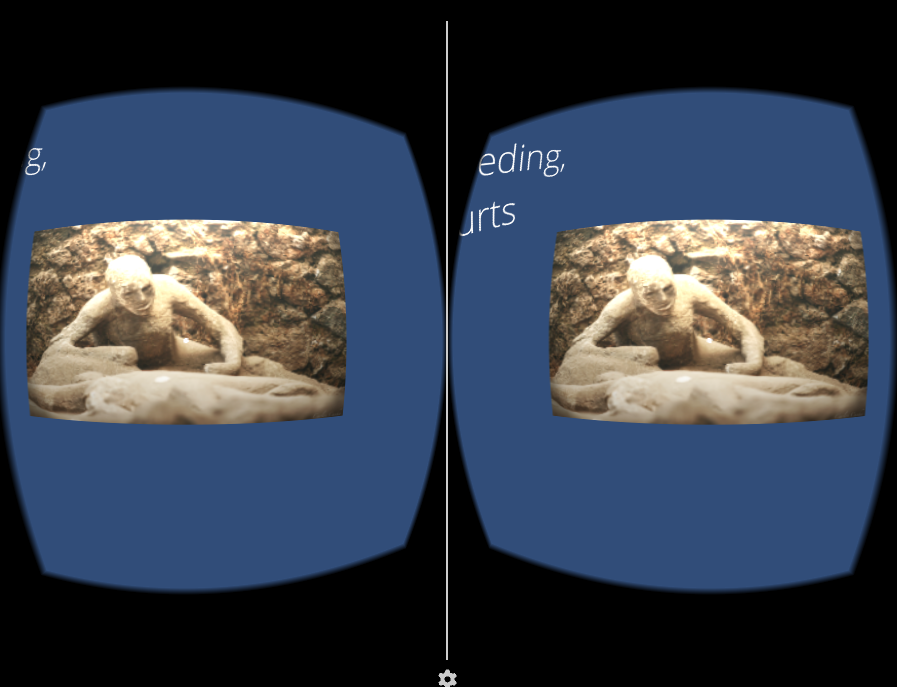

First scene in VR app

Some screenshots from the first scene, where one character dies.

This is the death scene with the lines from the dying person.

The performer must lie on the ground in the same position as the figure in the image in their headset.

The performer must then move their head in order to find the lines and read them. The performer decides the order of the lines.

Screenshots from actual app

Screenshots from Unity

Unity and TouchOSC work

I finally got the connection between TouchOSC and my app to work.

This is a key connection for my entire project: that the OSC signals can be received in the iPhone apps that the performers will wear in their headsets.

This is made from the UnityOSC classes by Jorge Garcia Martin.

All assets from UnityOSC should be imported into the Unity project. The important thing is then to change the initialization of the IP addresses in the OSCHandler.init. This test was done over my own home wifi, but the performance should run on a local network, i.e. one not connected to the internet. This should minimize the risk of dropouts, firewall blocking, etc.

On the sending side, the IP address of the Host should be set to the iPhone’s local IP address.
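For readers curious about what the receiving side does with a packet, here is a minimal Python sketch of an OSC listener and parser. It is not the UnityOSC code, which is written in C#, and it handles only int, float and string arguments. The function names, the port number and the timeout are hypothetical.

```python
import socket
import struct


def read_osc_string(packet: bytes, offset: int):
    """Read a null-terminated, 4-byte-aligned OSC string starting at offset."""
    end = packet.index(b"\x00", offset)
    text = packet[offset:end].decode("ascii")
    next_offset = (end + 4) & ~3  # skip the terminator and its padding
    return text, next_offset


def parse_osc_message(packet: bytes):
    """Return (address, [arguments]) for a simple OSC message."""
    address, offset = read_osc_string(packet, 0)
    tags, offset = read_osc_string(packet, offset)
    if not tags.startswith(","):
        raise ValueError("missing OSC type tag string")
    args = []
    for tag in tags[1:]:
        if tag == "i":
            args.append(struct.unpack_from(">i", packet, offset)[0])
            offset += 4
        elif tag == "f":
            args.append(struct.unpack_from(">f", packet, offset)[0])
            offset += 4
        elif tag == "s":
            text, offset = read_osc_string(packet, offset)
            args.append(text)
        else:
            raise ValueError(f"unsupported OSC type tag: {tag!r}")
    return address, args


def receive_one(bind_ip: str, port: int = 9000, timeout: float = 5.0):
    """Wait for a single OSC packet on bind_ip:port and parse it."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.bind((bind_ip, port))
        packet, _sender = sock.recvfrom(4096)
    return parse_osc_message(packet)
```

The sender has to know the receiver's address in advance, which is why a fixed local IP address on each phone keeps the setup predictable.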