AR research + a new concept

I want to keep developing my concept of dissociative walks, but instead of using data generated by my own actions (that is, my walking), I want to draw data from the environment I am walking through.

I thought Augmented Reality would mesh well with my concept because most AR apps draw a stark contrast between ‘reality’ (whatever the plain phone camera captures of the outside world) and the ‘augmentations’ that are added artificially. Pokémon Go is a perfect example.

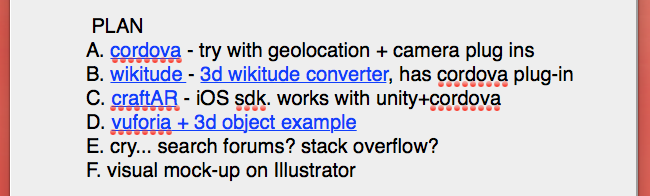

Many people are intimidated by mobile app development (including me), but the technical aspects I am envisioning seem well-documented. The first challenge will be researching the best tools to use. Here is my plan of attack:

Plan A

Cordova

This SDK feels like a good first step because you can program in JavaScript rather than Swift or Objective-C. There are ways to access geolocation and the camera directly, so this could potentially be a one-stop shop.
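To get a feel for the geolocation side, here is a minimal sketch of watching my position during a walk. It assumes the `cordova-plugin-geolocation` plugin, which exposes the standard `navigator.geolocation` API once Cordova's `deviceready` event has fired. The helper name `watchWalk` and the option values are my own placeholders, not anything from the Cordova docs.

```javascript
// Hypothetical helper: report the walker's position every time it changes.
// `geo` should look like navigator.geolocation (the W3C Geolocation API).
// Passing it in as a parameter makes the function easy to try with a fake.
function watchWalk(geo, onStep) {
  const watchId = geo.watchPosition(
    (position) => {
      // Keep only the fields the walk needs.
      onStep({
        lat: position.coords.latitude,
        lon: position.coords.longitude,
        accuracy: position.coords.accuracy, // in meters
      });
    },
    (error) => console.warn("Geolocation error:", error.message),
    // Assumed settings: favor GPS, accept fixes up to 5 s old, give up after 10 s.
    { enableHighAccuracy: true, maximumAge: 5000, timeout: 10000 }
  );

  // Return a function that stops watching.
  return () => geo.clearWatch(watchId);
}

// In a Cordova app this would be wired up roughly like so:
// document.addEventListener("deviceready", () => {
//   const stop = watchWalk(navigator.geolocation, (p) => console.log(p));
// });
```

Returning a stop function keeps the watch ID out of the rest of the app, which should make it simpler to end tracking when the walk is over.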

Plan B

Wikitude

This SDK has a Cordova plug-in, so I would still be able to code in JavaScript. It also has robust documentation and a tutorial that could lead to exactly what I’d like to do.

My only worry is adding 3D objects. It accepts only Maya and SketchUp objects, so anything involving Unity could be a problem.

Plan C

CraftAR

An iOS SDK I know the least about, but it apparently works with Unity + Cordova.

Plan D

Vuforia

This is simply a 3D object example, which could prove to be a good exercise in Unity and general mobile development, but it doesn’t get to the heart of what I’d like to communicate. Still, it would be something.

As of now,

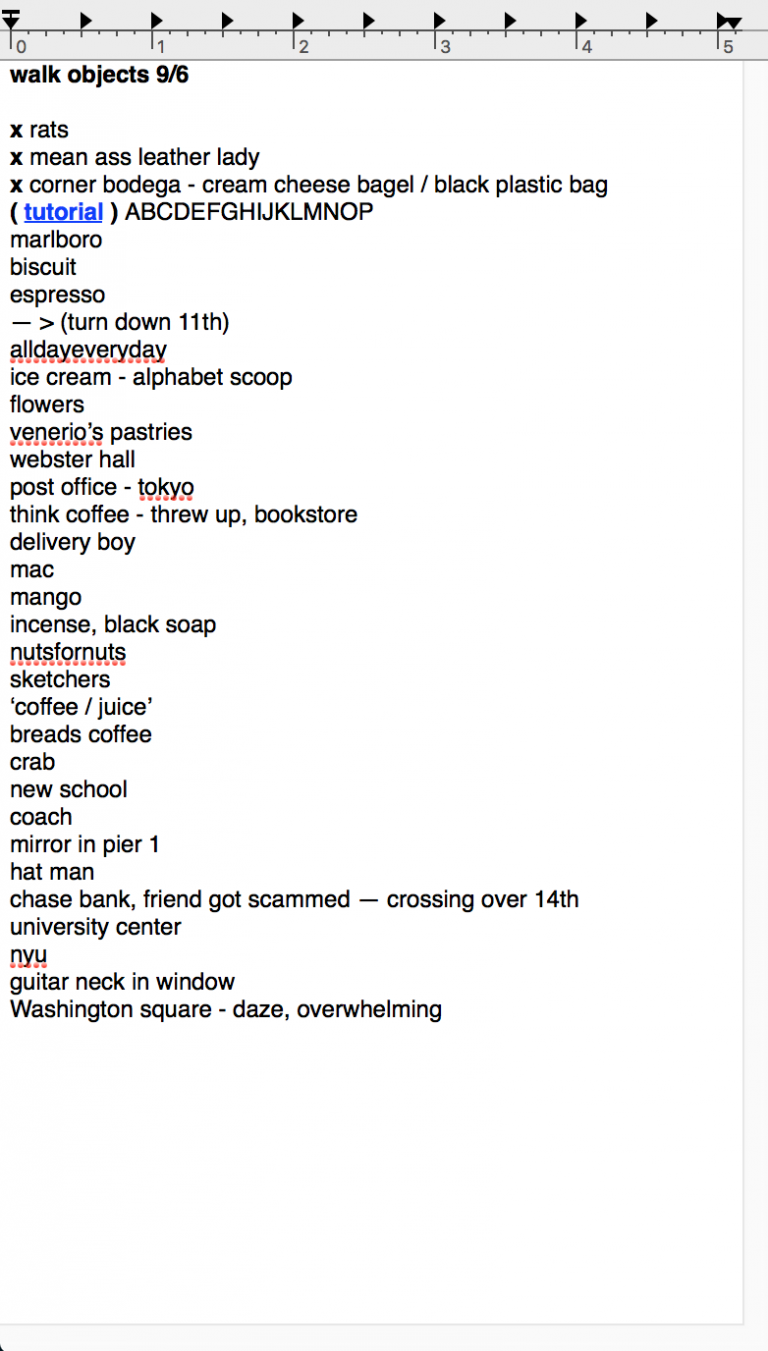

I have chosen a walk and listed any memorable moments or sights I can then turn into 3d objects to place along the path:

I will find free ones online, or create them fresh in Maya. The next step will be to match these objects with geolocations.
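Here is a rough sketch of what that matching could look like, assuming each object is stored with a latitude and longitude and should appear once I am within some radius of it. The object names, coordinates, and the 30-meter radius are all made-up placeholders, and the distance uses the haversine formula, which is a common approximation rather than anything a particular AR SDK requires.

```javascript
const EARTH_RADIUS_M = 6371000; // mean Earth radius in meters

function toRadians(degrees) {
  return (degrees * Math.PI) / 180;
}

// Great-circle distance between two { lat, lon } points (haversine formula).
function distanceMeters(a, b) {
  const dLat = toRadians(b.lat - a.lat);
  const dLon = toRadians(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRadians(a.lat)) * Math.cos(toRadians(b.lat)) * Math.sin(dLon / 2) ** 2;
  return 2 * EARTH_RADIUS_M * Math.asin(Math.sqrt(h));
}

// Return the objects whose anchor point is within radiusM of the walker.
function objectsNearby(position, placedObjects, radiusM = 30) {
  return placedObjects.filter((obj) => distanceMeters(position, obj) <= radiusM);
}

// Placeholder data: names and coordinates are invented for illustration.
const placedObjects = [
  { name: "memorable-sight-1", lat: 40.7295, lon: -73.9965 },
  { name: "memorable-sight-2", lat: 40.7308, lon: -73.9973 },
];

const here = { lat: 40.7296, lon: -73.9966 };
console.log(objectsNearby(here, placedObjects).map((obj) => obj.name));
```

Keeping the objects in a plain array like this means the same list could later be handed to whichever SDK ends up rendering the models.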
