Sorry for the late post.

Before the Project:

1. Can a machine think? Can a machine have intelligence? Can a machine have consciousness or emotion? These questions are more philosophical than technological because the answers vary with the criteria used to define intelligence, consciousness, subjectivity, etc. However, technological developments in artificial intelligence are expanding our understanding of AI’s abilities in different areas, as well as challenging and redefining the philosophical study of AI.

2. Some references on what it entails for a machine to have intelligence:

a) Turing Test:

To pass the Turing Test, a computer has to imitate the characteristics of a real human being well enough to converse smoothly with a human tester, who eventually mistakes his/her conversation partner, the computer, for a real human.

b) The Chinese Room:

To argue against “Strong AI” (the claim that the programs running on a computer enable the computer to behave intelligently), American philosopher John Searle proposed a scenario called the Chinese Room in 1980. A person who only speaks English is in a room with a pen, paper, and a set of rules for matching English vocabulary to Chinese characters (like a dictionary). This person will be able to communicate in written Chinese with a Chinese-speaking person, even though he/she doesn’t understand Chinese. Likewise, a computer doesn’t need to understand Chinese in order to translate; there are merely inputs, outputs, and the rules to convert the former to the latter.

c) Gödel, Escher, Bach: An Eternal Golden Braid (I’m still reading some of the chapters from this book)

d) Comparing human intelligence and AI

In the 1960s, Allen Newell and Herbert Simon proposed that “symbol manipulation” is the common method of both human and machine intelligence. In addition, philosopher Hubert Dreyfus summarizes this view as: “The mind can be viewed as a device operating on bits of information according to formal rules.” (There are also other theories on this topic, but this is the one I’m most interested in.)

3. As a critical component of AI, Computer Vision (CV) has reached a new stage after its flourishing development during the 90s and 00s. Now, CV is able to recognize not only a single object, but also the environment around it. For example, CV might detect: “an elephant is standing next to two people who are drinking water.” However, while there are abundant databases for recognizing nouns, verbs and even adjectives, which are often more subjective, are still the missing links in CV’s interpretation. Teaching CV to tell a story from an image is a crucial challenge. (Reference: a recent TED talk by Fei-Fei Li of the Stanford Vision Lab. There are also some demos on their website.)

http://www.ted.com/talks/fei_fei_li_how_we_re_teaching_computers_to_understand_pictures?language=en

4. Many art and design projects use CV as a tool, sometimes in superficial ways for cool visual effects. However, I want to explore the essence of CV, its potential in the future, and the philosophical and social implications behind it. CV Dazzle, a project that Justin sent me, caught my attention because it examines what it is like to live surrounded by computer vision. In response, the designer created make-up patterns that camouflage people from being recognized by the computer.

http://ahprojects.com/projects/cv-dazzle/

The Project:

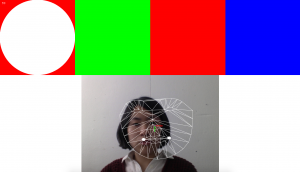

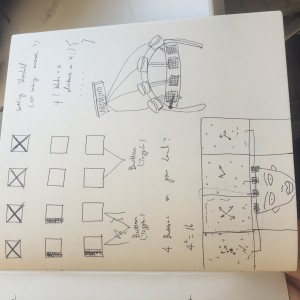

In this project, the color of an image (or of a web cam feed), as the most straightforward element for representing emotion, will be detected by the color tracking add-on(s) for openFrameworks. Then, according to the percentage of the most used color, the original image will be deformed and recreated by manipulating meshes and moving different parts of them. For example, a blue-dominant image’s meshes might be torn apart into broken pieces shaking against a black background, to represent a melancholic state of mind. This project is an idealistic representation of a computer’s mind and emotion. It might oversimplify the process of CV, but it is an optimistic expectation for the future of implementing CV in various areas of our lives.

Technical Challenges:

Color tracking (and analyzing? How do I read the color with the highest percentage of presence?)
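
One possible way to approach the "highest percentage" question, sketched in plain C++ so it runs without openFrameworks: convert each pixel to a hue, count the hues in a small number of bins, and report the fullest bin together with its share of the pixels. The `Rgb` struct, the function names, the 12-bin default, and the choice to skip grey pixels are all assumptions made for illustration, not part of any add-on. In the real sketch the pixel values would come from the loaded image or the web cam frame.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical plain pixel type; stands in for whatever the image source provides.
struct Rgb { std::uint8_t r, g, b; };

// Which hue bin won, and what share of the counted pixels fell into it.
struct DominantHue {
    int bin;          // index in [0, bins), or -1 if no pixel had a hue
    double fraction;  // share of counted (non-grey) pixels in that bin
};

// Standard RGB-to-hue conversion. Returns degrees in [0, 360),
// or -1 for grey pixels, which have no defined hue.
double hueDegrees(Rgb p) {
    const double r = p.r / 255.0, g = p.g / 255.0, b = p.b / 255.0;
    const double maxC = std::max({r, g, b});
    const double minC = std::min({r, g, b});
    const double delta = maxC - minC;
    if (delta == 0.0) return -1.0;
    double h;
    if (maxC == r)      h = std::fmod((g - b) / delta, 6.0);
    else if (maxC == g) h = (b - r) / delta + 2.0;
    else                h = (r - g) / delta + 4.0;
    h *= 60.0;
    if (h < 0.0) h += 360.0;
    return h;
}

// Histogram the hues and return the most populated bin.
// Assumption: grey pixels are ignored rather than counted as a color.
DominantHue dominantHue(const std::vector<Rgb>& pixels, int bins = 12) {
    assert(bins > 0);
    std::vector<std::size_t> counts(static_cast<std::size_t>(bins), 0);
    std::size_t counted = 0;
    for (Rgb p : pixels) {
        const double h = hueDegrees(p);
        if (h < 0.0) continue;  // skip greys
        const int bin = std::min(bins - 1, static_cast<int>(h * bins / 360.0));
        ++counts[static_cast<std::size_t>(bin)];
        ++counted;
    }
    if (counted == 0) return {-1, 0.0};
    const auto best = std::max_element(counts.begin(), counts.end());
    return {static_cast<int>(best - counts.begin()),
            static_cast<double>(*best) / static_cast<double>(counted)};
}
```

With 12 bins each bin spans 30 degrees, so a mostly blue image should land in the bin that contains 240 degrees with a fraction close to 1. That fraction could then decide how strongly the mesh is deformed.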

ofMeshes (This tutorial is what I am going for: http://openframeworks.cc/tutorials/graphics/generativemesh.html)
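
As a rough sketch of the "shaking pieces" idea, here is a minimal, framework-free C++ example that builds a grid of vertices and returns a jittered copy, where the jitter grows with a strength value between 0 and 1 (for instance, the share of the dominant color). The `Vec2` struct, `makeGrid`, `shake`, and the `maxOffset` parameter are hypothetical names for illustration only; in openFrameworks the positions would live in an `ofMesh` instead, and this is not the method from the linked tutorial.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical 2D point; stands in for a mesh vertex position.
struct Vec2 { float x, y; };

// Build a cols x rows grid of rest positions, `spacing` units apart.
std::vector<Vec2> makeGrid(int cols, int rows, float spacing) {
    assert(cols > 0 && rows > 0);
    std::vector<Vec2> pts;
    pts.reserve(static_cast<std::size_t>(cols) * static_cast<std::size_t>(rows));
    for (int y = 0; y < rows; ++y)
        for (int x = 0; x < cols; ++x)
            pts.push_back({x * spacing, y * spacing});
    return pts;
}

// Return a shaken copy of the rest positions. Each vertex moves by at most
// maxOffset * strength along each axis; strength 0 leaves the grid untouched.
// Assumption: strength is a value in [0, 1], such as a dominant color fraction.
std::vector<Vec2> shake(const std::vector<Vec2>& rest, float strength,
                        float maxOffset, std::mt19937& rng) {
    assert(strength >= 0.0f && strength <= 1.0f);
    const float amp = maxOffset * strength;
    std::uniform_real_distribution<float> jitter(-1.0f, 1.0f);
    std::vector<Vec2> out;
    out.reserve(rest.size());
    for (const Vec2& p : rest)
        out.push_back({p.x + amp * jitter(rng), p.y + amp * jitter(rng)});
    return out;
}
```

Calling `shake` once per frame on the unchanged rest positions, rather than on the previous frame's result, keeps the pieces trembling around their original places instead of drifting away.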