Week 9-2, 10-1: Augmented Reality in Processing

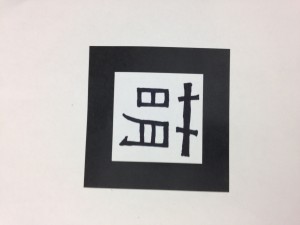

Last year I went to Dorkbot NYC and watched a talk by someone from NYU introducing Layar, the augmented reality phone app they were developing. Through the phone's camera, the audience could see virtual objects appear in their surroundings and move around them continuously. It was incredibly enjoyable to do something similar (but simpler) in our Core Lab. We tested a Japanese Processing library that lets the computer's camera detect a “marker” printed on paper, a pattern we designed and generated with this Marker Generator. We generated four “marker” files at different resolutions: 4×4, 8×8, 16×16 and 32×32. The camera first detects the bold black border of the “marker” and then recognizes the pixel pattern inside.

In the following class, we expanded our knowledge of beginShape() and endShape() in Processing by creating an ellipse with beginShape(QUAD_STRIP). We further tested augmented reality with a potentiometer that controlled the visual effects around it.
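
For readers who have not used QUAD_STRIP before, here is a rough sketch of the idea, not the exact code from class. It assumes the "ellipse" is drawn as an elliptical ring: vertices alternate between an outer and an inner ellipse, and Processing joins each consecutive pair into a quad. The class name `QuadStripRing` and all of its parameters are made up for illustration. It is written as plain Java so it can run on its own.

```java
// Hypothetical helper: computes the vertices of an elliptical ring in the
// order beginShape(QUAD_STRIP) expects (outer, inner, outer, inner, ...).
final class QuadStripRing {
    // cx, cy: center; rx, ry: outer radii; thickness: ring width;
    // segments: how many quads approximate the ring.
    static float[][] vertices(float cx, float cy, float rx, float ry,
                              float thickness, int segments) {
        // One extra pair at the end repeats the first pair and closes the ring.
        float[][] pts = new float[2 * (segments + 1)][2];
        for (int i = 0; i <= segments; i++) {
            double angle = 2.0 * Math.PI * i / segments;
            float c = (float) Math.cos(angle);
            float s = (float) Math.sin(angle);
            // Point on the outer ellipse
            pts[2 * i][0] = cx + rx * c;
            pts[2 * i][1] = cy + ry * s;
            // Matching point on the inner ellipse
            pts[2 * i + 1][0] = cx + (rx - thickness) * c;
            pts[2 * i + 1][1] = cy + (ry - thickness) * s;
        }
        return pts;
    }
}
```

In a Processing sketch, the points would be passed to vertex() between beginShape(QUAD_STRIP) and endShape(), for example by looping over `QuadStripRing.vertices(200, 200, 120, 80, 30, 36)` inside draw() and calling `vertex(p[0], p[1])` for each point `p`.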

Here is the video documentation:

Augmented Reality in Processing – Nyar4 Library Test from Yumeng Wang on Vimeo.
