An Introduction to Psychoacoustics

This beginner’s guide is adapted from Paul Oomen’s article on the Red Bull Music Academy website. Paul Oomen is a composer and the founder of 4DSOUND, which develops innovative spatial sound technology and acts as a creative laboratory for spatiality in music.

Ultimately, all sound that we perceive is psychoacoustic. As soon as sound passes through the ears, it stops being a physical phenomenon and becomes a matter of perception. Because of the peculiarities and limitations of our hearing, what we hear almost always differs from what is actually sounding. And what we hear can differ greatly from what we think we are listening to, because of the many tricks that perception plays on our awareness.

Continuum of Tones and Beats

Awareness of psychoacoustics, and the possibility of applying it creatively in music, took a large leap with the development of electronic music in the early 1950s. Electronic sound enabled artists to explore a much larger spectrum of sound than was ever possible with acoustic instruments. It also extends far beyond the limits of human hearing, which are generally given as 16 Hz to 20 kHz (with the upper limit falling to around 16 kHz with age).

Karlheinz Stockhausen created a pivotal moment in the history of music with his work Kontakte (1958-1960). According to the composer, “In the preparatory work for my composition Kontakte, I found, for the first time, ways to bring all properties of sound [i.e. timbre, pitch, intensity and duration] under a single control.” The most famous moment, at 17:03, is a potent illustration of these connections. A high, bright tone descends in several waves, growing louder as it gradually acquires a snarling timbre, until it finally drops below the point where it can still be heard as a pitch. As it crosses this threshold, it becomes evident that the tone consists of pulses, which continue to slow until they become a steady beat.

This famous descending tone reveals something fundamental about our hearing. Once a tone passes below the threshold of 16 Hz, we stop perceiving a tone and start to hear beats. This range of hearing had never been explored in this way before, because no instrument could play across this frequency range. Until then, beats and tones were considered separate musical properties, and they often still are: beats belong to the realm of rhythm and tempo, tones to melody and harmony. With Kontakte, Stockhausen showed that beats and tones form a continuum, and that the distinction between them is an illusion. Whether we perceive a sound as beats or as a tone depends solely on the lower threshold of our hearing.
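As a rough illustration of this continuum, the Python sketch below builds a click train (a hypothetical stand-in, not Stockhausen’s actual material) and sweeps its repetition rate downward, labeling each rate with the 16 Hz threshold given above. Treating that threshold as a hard cutoff is an assumption made for the example, and the names `descending_rates`, `percept` and `pulse_train` are illustrative only.

```python
# Sketch: a click train whose repetition rate sweeps downward, labeled with
# the 16 Hz threshold the text uses. Rates and sample rate are illustrative.

PITCH_THRESHOLD_HZ = 16.0  # lower threshold of pitch, as stated in the text


def descending_rates(start_hz, end_hz, steps):
    """Exponentially spaced repetition rates from start_hz down to end_hz."""
    ratio = (end_hz / start_hz) ** (1.0 / (steps - 1))
    return [start_hz * ratio ** i for i in range(steps)]


def percept(rate_hz):
    """Crude label (assumed hard cutoff): a tone at or above the threshold, beats below."""
    return "tone" if rate_hz >= PITCH_THRESHOLD_HZ else "beats"


def pulse_train(rate_hz, duration_s, sample_rate=44100):
    """Unit clicks spaced one period (1 / rate_hz seconds) apart."""
    n = int(duration_s * sample_rate)
    period = sample_rate / rate_hz  # samples between clicks
    out = [0.0] * n
    k = 0
    while round(k * period) < n:
        out[round(k * period)] = 1.0
        k += 1
    return out


for rate in descending_rates(440.0, 2.0, 8):
    print(f"{rate:7.2f} Hz -> {percept(rate)}")
```

The same click generator produces a buzzy pitch at 440 clicks per second and a countable pulse at 4 per second; only the rate changes.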


Harmonics and Difference Tones

The continuity of beats and tones reveals itself once more when we explore the world of harmonics and subharmonics. If two or more tones have a harmonic relation (i.e. they are whole-number multiples 2F, 3F, 4F, 5F, etc. of a common fundamental F), they produce a spectrum of overtones that completes this harmonic series. A harmonic spectrum is an acoustical phenomenon that can be measured, but in most cases it is not perceived as such. What we perceive instead is a specific timbre, the color of the tone, which is very hard to separate from the tone itself.
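A minimal sketch of the harmonic relation just described, assuming the frequencies are integers in Hz: it lists the series F, 2F, 3F, … and recovers the common fundamental of a set of tones as their greatest common divisor. The helper names are hypothetical.

```python
from functools import reduce
from math import gcd


def harmonic_series(fundamental_hz, count):
    """The first `count` partials of a harmonic tone: F, 2F, 3F, ..."""
    return [n * fundamental_hz for n in range(1, count + 1)]


def common_fundamental(freqs_hz):
    """Largest F of which every frequency is a whole-number multiple.

    Assumes the frequencies are given as integers in Hz.
    """
    return reduce(gcd, freqs_hz)


def harmonic_numbers(freqs_hz):
    """Common fundamental plus each tone's position n in its series."""
    f0 = common_fundamental(freqs_hz)
    return f0, [f // f0 for f in freqs_hz]


print(harmonic_series(100, 5))            # [100, 200, 300, 400, 500]
print(harmonic_numbers([200, 300, 500]))  # (100, [2, 3, 5])
```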

A powerful example of making the overtones and undertones in a spectrum explicitly audible is the modern overtone singing of vocalists Mark van Tongeren and Rollin Rachele.

At first, the two singers tune their individual tones to the harmonic relation F11 and F12, the 11th and 12th harmonics of a common fundamental (corresponding roughly to the pitches E+ and F#-). Once they sing together, an exploration of many different overtones in their shared spectrum can be heard. Besides the steady tones of the two male voices, one in your right ear and one in your left, a third, high melody can be heard right in the middle, between the ears. This flute-like melody does not seem to belong to either of the two voices, but is perceived as a separate entity: a phantom voice.

On the low side of the spectrum we perceive yet another phenomenon: the fundamental F1, which equals the difference tone between the two voices. The difference tone becomes clearly audible when it is smaller than 16 Hz and thus falls below the lower threshold of hearing. The voices therefore also evoke a clearly audible beating (an infra-low B at 15 Hz).
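The arithmetic behind this can be checked directly. The sketch assumes the fundamental is exactly 15 Hz (the text gives the pitches only roughly), so F11 and F12 come out at 165 Hz and 180 Hz; their difference is the fundamental itself, which falls below the 16 Hz threshold.

```python
PITCH_THRESHOLD_HZ = 16.0  # lower threshold used throughout the text
F1_HZ = 15.0               # assumed: the text's infra-low B, taken as exactly 15 Hz


def difference_tone(f_a_hz, f_b_hz):
    """Frequency of the difference tone between two simultaneous tones."""
    return abs(f_a_hz - f_b_hz)


voice_a = 11 * F1_HZ  # F11 = 165 Hz under this assumption
voice_b = 12 * F1_HZ  # F12 = 180 Hz under this assumption
diff = difference_tone(voice_a, voice_b)

print(diff)  # 15.0, i.e. the shared fundamental F1
print("heard as beating" if diff < PITCH_THRESHOLD_HZ else "heard as a low tone")
```

Any two adjacent harmonics differ by exactly the fundamental, which is why the difference tone and F1 coincide here.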

Binaural Beats

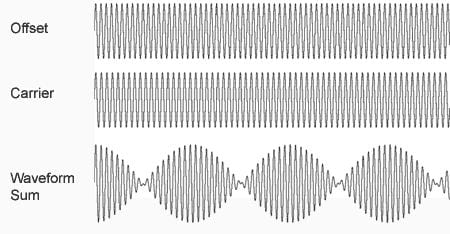

A related perception of the difference tone appears in a phenomenon called binaural beats. These beats are an artifact of two tones at slightly different frequencies (differing by less than 30 Hz), presented separately to each of the listener’s ears through stereo headphones. Remarkably, in this case the beats do not exist as an acoustical phenomenon. Instead, they arise in the brain, which encodes the difference in frequency between the two tones.

In this example, a 300 Hz tone is played in one ear and a 306 Hz tone in the other, so the binaural beats have a frequency of 6 Hz.
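The sketch below, a minimal illustration with an assumed 8 kHz sample rate, computes the 6 Hz beat rate and shows why the beats are not acoustic in this setup: each headphone channel on its own is a steady sine with a flat envelope, and only the sum of the two signals, as it would occur if both tones were mixed in the air, actually swells and fades.

```python
import math

SR = 8000  # sample rate in Hz, chosen for illustration


def binaural_beat_hz(left_hz, right_hz):
    """Beat rate the listener perceives: the difference of the two tones."""
    return abs(left_hz - right_hz)


def peak_envelope(signal, window):
    """Peak absolute value in consecutive windows: a crude amplitude envelope."""
    return [max(abs(x) for x in signal[i:i + window])
            for i in range(0, len(signal) - window + 1, window)]


t = [n / SR for n in range(SR)]  # one second of sample times
left = [math.sin(2 * math.pi * 300 * s) for s in t]   # sent only to the left ear
right = [math.sin(2 * math.pi * 306 * s) for s in t]  # sent only to the right ear

# Each headphone channel on its own has a flat envelope: no acoustic beat.
env_left = peak_envelope(left, 80)  # 10 ms windows
# Only if the tones are mixed in the air (e.g. one loudspeaker) does the
# summed signal itself swell and fade 6 times per second.
env_mixed = peak_envelope([a + b for a, b in zip(left, right)], 80)

print(binaural_beat_hz(300, 306))  # 6
```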


Phantom Voices

A striking appearance of a phantom voice occurs in the Easter ritual of the Cuncordo de Castelsardo, a brotherhood on the island of Sardinia. A choir of four brothers evokes a fifth voice, the quintina. This higher, female voice is said to be the spirit of the Holy Virgin Mary.

As Mark van Tongeren recently wrote in his PhD thesis, “At a certain moment my attention was distracted by a female voice that joined the choir. I instantly turned around, because the voice did not come from them, but sounded behind me. Of course there was no woman in sight. Flabbergasted I turned back my head and focused on the four singing brothers. I concluded that this very real voice must have come from them. She arose from the fusion of their four voices. After about 18 years of experience with overtone singing and listening experiments all over the world I thought to have a blind faith in my ears. Until I turned my head in an impulse, and for that moment really believed hearing this female voice and I wanted to see her.”

You can hear an example of the quintina in the accompanying recording, Te Deum.

The quintina is a phantom voice that differs significantly from the overtone-singing example above. Here the effect is a coherent female voice, with no trace of overtone singing. Vocalist and ethnomusicologist Mark van Tongeren, who witnessed and recorded the ritual, has tried to reproduce the quintina with singers from his vocal ensemble Paraphonia, and has travelled to several similar brotherhoods on Sardinia in search of it. But he has never found the phenomenon anywhere except in this specific place, with this brotherhood.

My suspicion is that the quintina arises from the very strongly belted vowels of the words sung by the brothers, combined with specific acoustic attributes of their church. There is a direct connection between spoken vowels and particular resonances of the voice (formants), which correspond to bands in the overtone series. Strongly emphasizing those vowels while singing could, under the right acoustical circumstances, make certain higher tones of the voice more clearly perceptible.

To explain further, Mark van Tongeren demonstrates in the accompanying example how vowels correspond to the resonant spectrum of the voice, and which overtones can be found there.
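To make the vowel and overtone connection concrete, this hypothetical sketch lists which harmonics of a sung fundamental fall inside a vowel’s formant regions. The formant values are approximate, commonly cited averages for an adult male voice and the ±100 Hz band is an assumption; neither is taken from van Tongeren’s demonstration.

```python
import math

# Assumed, approximate first and second formants (Hz) for three vowels of an
# adult male voice: commonly cited averages, not measurements of the singers
# discussed in the text.
FORMANTS_HZ = {
    "i": (270, 2290),
    "a": (730, 1090),
    "u": (300, 870),
}
HALF_WIDTH_HZ = 100  # assumed half-bandwidth of each formant region


def harmonics_in_band(f0_hz, center_hz, half_width_hz=HALF_WIDTH_HZ):
    """Harmonic numbers n whose frequency n * f0_hz lies in center +/- half_width."""
    lo = center_hz - half_width_hz
    hi = center_hz + half_width_hz
    n_min = max(1, math.ceil(lo / f0_hz))
    n_max = math.floor(hi / f0_hz)
    return list(range(n_min, n_max + 1))


f0 = 110.0  # an A2 drone, chosen for illustration
for vowel, (f1, f2) in FORMANTS_HZ.items():
    print(vowel, harmonics_in_band(f0, f1), harmonics_in_band(f0, f2))
```

With these assumed values, singing “i” on a 110 Hz drone places harmonics 20 and 21 (2200 Hz and 2310 Hz) inside the upper formant, the kind of high partial that strong vowel emphasis could bring forward.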

Miserere Funebre (edit)

Time Differences

Instead of many voices fusing into one composite voice, as with the quintina, a single voice can also be perceived as decomposing into many. A classic example is Steve Reich’s 1966 tape piece “Come Out”, in which a single speech recording is unraveled step by step into individual noises and beats. To achieve this, Reich applies constantly shifting interaural time differences: differences in phase of the sound between the left and the right ear. In the 1960s, this was achieved simply by running the left and right tape loops at slightly different speeds.
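The tape technique reduces to simple arithmetic: if one loop runs at a slightly different speed from the other, the offset between them grows linearly with time until it wraps around after a full loop. The speed ratio and loop length below are hypothetical values chosen for illustration, not Reich’s actual settings.

```python
def loop_offset_s(elapsed_s, speed_ratio, loop_length_s):
    """Time offset between two copies of one tape loop after elapsed_s seconds,
    when one copy runs at speed_ratio times the speed of the other.

    The offset wraps around once it reaches a full loop length, at which
    point the two copies line up again.
    """
    drift = elapsed_s * abs(speed_ratio - 1.0)
    return drift % loop_length_s


def time_to_offset_s(target_offset_s, speed_ratio):
    """How long the loops must run before the offset reaches target_offset_s."""
    return target_offset_s / abs(speed_ratio - 1.0)


RATIO = 1.005  # hypothetical: one machine running 0.5 % fast
LOOP = 1.5     # hypothetical loop length in seconds

print(f"{loop_offset_s(10.0, RATIO, LOOP) * 1000:.1f} ms after 10 s")
print(f"{time_to_offset_s(0.2, RATIO):.0f} s to drift 200 ms apart")
```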

You can hear “Come Out” in the accompanying video.

As an introduction, we hear the original voice recording in balanced stereo: “I had to, like, open the bruise up and let some of the bruise blood come out to show them.” Then a sample of this voice is looped and starts to phase slowly from the right to the left ear: “Come out to show them.” The phase difference increases until the voice appears to split into two distinguishable voices, one on the left and one on the right.

In the next section, Reich plays back the doubled voice and repeats exactly the same process, now phasing it from left to right and again increasing the phase difference. The two voices soon become four, but instead of four voices we hear the individual vowels and consonants detach from the voice as separate beats and noises, forming rhythms in four parts that shift from left to right at different timings. It becomes increasingly hard to perceive the sound as speech.

As a coda, Reich runs the process once more, now with the speech sample shortened to “out to show” and the double voice itself doubled again. The voice is transformed into noise bands that form continuously shifting lines of eight beats, which can no longer be traced back to their source, the speaking voice.

A fundamental psychoacoustic effect underlying the entire process of “Come Out” is the varying arrival time of the sound at the left and the right ear. If the sound arrives earlier at one ear, we perceive it as located on that side. As long as the time difference stays small enough, changing it makes the apparent location of the sound source move. When the difference is exaggerated beyond, say, 200 milliseconds, the correlation of the sound between the two ears dissolves and two individual sound sources are perceived.
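The two regimes described above can be sketched as a small classifier. It uses the text’s rough 200 ms figure as a hard cutoff, which is an assumption, and it does not model what happens between very small offsets and that cutoff; `describe_arrival` is a hypothetical helper.

```python
SPLIT_THRESHOLD_S = 0.200  # the text's rough figure ("say, 200 milliseconds")


def describe_arrival(arrival_left_s, arrival_right_s):
    """Rough description of how a sound reaching each ear at the given times
    is heard, following the two regimes described in the text."""
    itd = arrival_right_s - arrival_left_s  # > 0: the left ear hears it first
    if abs(itd) > SPLIT_THRESHOLD_S:
        return "two separate sources"
    if itd > 0:
        return "one source, heard toward the left"
    if itd < 0:
        return "one source, heard toward the right"
    return "one source, centered"


for left_s, right_s in [(0.0, 0.0), (0.0, 0.0003), (0.0003, 0.0), (0.0, 0.25)]:
    print(left_s, right_s, "->", describe_arrival(left_s, right_s))
```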

Listen to the accompanying sample for an example of the time-difference effect. The audio begins in phase, drifts increasingly out of phase, and returns to being in phase.

Time Difference

Binaural Hearing

Phase differences between the two ears are one of the aspects that make up our binaural hearing. Localization of sound originates in the fact that the ears are some distance apart, so the brain always registers slight differences in the phase, intensity and spectrum of the sound reaching each ear.
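How large are these natural time differences? One standard approximation, swapped in here rather than taken from the text, is Woodworth’s spherical-head formula, ITD = (a / c)(θ + sin θ), where a is the head radius, c the speed of sound, and θ the azimuth in radians (valid from 0° to 90°). With an assumed 8.75 cm radius it gives a maximum of roughly 0.66 ms for a source directly to one side.

```python
import math

HEAD_RADIUS_M = 0.0875      # assumed average head radius
SPEED_OF_SOUND_M_S = 343.0  # in air at about 20 degrees C


def woodworth_itd_s(azimuth_deg, radius_m=HEAD_RADIUS_M, c=SPEED_OF_SOUND_M_S):
    """Interaural time difference for a distant source at the given azimuth
    (0 = straight ahead, 90 = directly to one side), spherical-head model."""
    theta = math.radians(azimuth_deg)
    return (radius_m / c) * (theta + math.sin(theta))


for az in (0, 15, 45, 90):
    print(f"{az:3d} deg -> {woodworth_itd_s(az) * 1e6:6.1f} microseconds")
```

Even the largest natural value is under a millisecond, far below the offsets that split one voice into two in “Come Out”.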

In “Gwely Mernans” from Aphex Twin’s Drukqs, we can hear low beats and high tones wavering around the head through shifting time and level differences between the two ears.
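A crude time-and-level panner, sketched below under the assumption of a whole-sample delay and a fixed decibel gain, shows the two cues involved. Sweeping `itd_s` and `ild_db` over time would make a source waver between the ears. The function is a hypothetical illustration, not a reconstruction of how the track was made, and it ignores the spectral cues of the outer ear.

```python
def delay(signal, samples):
    """Shift a signal later by `samples`, keeping its length (zero-padded)."""
    samples = min(samples, len(signal))
    return [0.0] * samples + list(signal[:len(signal) - samples])


def pan_itd_ild(mono, sample_rate, itd_s, ild_db):
    """Return (left, right) copies of a mono signal with an interaural time
    difference and level difference applied. Positive values favor the left
    ear (the right channel is delayed / attenuated); negative values favor
    the right ear."""
    left, right = list(mono), list(mono)
    lag = round(abs(itd_s) * sample_rate)
    if itd_s > 0:
        right = delay(right, lag)
    elif itd_s < 0:
        left = delay(left, lag)
    gain = 10 ** (-abs(ild_db) / 20)  # dB -> linear amplitude factor
    if ild_db > 0:
        right = [x * gain for x in right]
    elif ild_db < 0:
        left = [x * gain for x in left]
    return left, right


left, right = pan_itd_ild([1.0, 0.0, 0.0, 0.0], 1000, 0.002, 6.0)
print(left, right)
```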

There is definitely more happening with the sound than just panning between the left and right ear. If you listen to “Gwely Mernans” in an anechoic chamber with a frontal stereo setup, the beats actually move clearly in circles around you, left and right as well as in front and behind, even though no speakers are placed there. Whether a sound source is localized in front of or behind the listener, or above or below, is not a matter of the left-right separation of the ears. It depends on the complex filtering the sound undergoes as it reflects at different angles off the ear’s auricle (the outer ear) and bounces off the shoulders.

Even if the sound waves are projected from the front, the brain can render the sound as coming from behind if the right filtering is applied. In practice, this filtering is very hard to achieve and the effect remains highly unstable, because no two pairs of ears have the same shape. Everyone learns to localize sound in three dimensions based on the individual shape of their own ears, so no single formula can guarantee the effect for every listener.
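The filtering described here is usually applied as a convolution with a pair of head-related impulse responses (HRIRs), one per ear. The sketch shows that step with plain-Python convolution; the impulse responses are made-up toy values, since real ones are measured and, as the paragraph explains, differ from listener to listener.

```python
def convolve(signal, impulse_response):
    """Direct-form linear convolution (length len(signal) + len(ir) - 1)."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, x in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += x * h
    return out


def render_binaural(mono, hrir_left, hrir_right):
    """Filter one mono source through a left/right pair of head-related
    impulse responses to place it at the direction they were measured for."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


# Hypothetical toy impulse responses, NOT measured data: real HRIRs are a few
# hundred taps long and differ from listener to listener.
TOY_HRIR_LEFT = [0.9, 0.3, -0.1]
TOY_HRIR_RIGHT = [0.0, 0.0, 0.5, 0.2]

left, right = render_binaural([1.0, 0.5], TOY_HRIR_LEFT, TOY_HRIR_RIGHT)
print(left, right)
```

Swapping in another listener’s HRIR pair changes the output for the same input, which is one way to picture why the effect is so individual.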

Therefore the binaural illusion might not be apparent to everybody listening to this track, but good-quality closed headphones or playback in an anechoic chamber will increase the chance of perceiving the full binaural illusion.

Monaural Cancellation

An interesting concept that takes the binaural nature of sound perception to the extreme is Ryoji Ikeda’s “Data.Googolplex.” This sound piece consists largely of two mirror-image waveforms, one the polarity-inverted copy of the other, presented to the left and the right ear.

Heard on headphones or stereo speakers, the piece in fact appears to be in mono. But the psychoacoustic secret hidden in “Data.Googolplex” is that if the two waveforms are actually summed to mono, the sound cancels completely. If two identical sound waves are exactly in phase, they add up to a wave with double the amplitude. But if they are exactly out of phase, they cancel each other out: every peak of one wave meets an equally deep trough of the other, and the sum is zero.
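The cancellation is easy to verify numerically. In this sketch a 440 Hz sine is a hypothetical stand-in for the piece’s actual material: adding a wave to itself doubles its peak, while adding it to its polarity-inverted copy gives exactly zero at every sample.

```python
import math

SR = 8000  # sample rate in Hz (illustrative)
# A 440 Hz sine stands in for the piece's actual material, which is not
# reproduced here.
wave = [math.sin(2 * math.pi * 440 * n / SR) for n in range(SR // 10)]
inverted = [-x for x in wave]  # the polarity-inverted mirror image

in_phase = [a + b for a, b in zip(wave, wave)]       # same wave twice
mono_fold = [a + b for a, b in zip(wave, inverted)]  # left + right summed

print(max(in_phase) / max(wave))       # 2.0: double the amplitude
print(max(abs(x) for x in mono_fold))  # 0.0: complete cancellation
```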

Interestingly, the brain does not distinguish between the upward and downward curves of a wave. It encodes sound waves equally in both directions, and only the amplitude (depth) of the wave matters for perception. Hence, “Data.Googolplex” is perceived as perfectly mono, and the binaural division of the waveforms is entirely virtual.
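A signal-level analogy, not a model of neural encoding: inverting a waveform’s polarity shifts the phase of every frequency component by half a cycle but leaves the magnitude spectrum untouched. The naive DFT below, run on arbitrary illustrative samples, confirms that the magnitudes match.

```python
import cmath
import math


def dft_magnitudes(signal):
    """Magnitude of each bin of a naive discrete Fourier transform."""
    n = len(signal)
    return [abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(signal)))
            for k in range(n)]


# Arbitrary illustrative samples, not taken from any recording.
signal = [0.0, 0.8, 0.3, -0.5, -0.9, 0.1, 0.6, -0.2]
inverted = [-x for x in signal]

same = all(math.isclose(a, b, abs_tol=1e-12)
           for a, b in zip(dft_magnitudes(signal), dft_magnitudes(inverted)))
print(same)  # True
```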

This brings us back to the initial question: where does sound actually exist? In a virtual code, as physical waves in space, as waves encoded by the brain, or as an idea, a memory or a fantasy? Each of the above examples calls for a different answer: sound resides sometimes here, sometimes there, maybe everywhere at once or nowhere at all. This question is not merely a curiosity of the peculiar examples presented here; it underlies the perception of all the sound and music we encounter.